What is Google Search Console and what does it do?

Google Search Console, formerly known as Google Webmaster Tools, is a free-to-use interface provided by Google to help ensure your websites can be crawled and indexed correctly by Googlebot.

It offers a range of tools to identify potential issues with your site (typically broken pages that you should take steps to fix) and to discover malware attacks on your site. It also lets you track your site’s performance in Google: the clicks to your site, the number of impressions and an average position for your keyword phrases.

There is a new Search Console, but it is still in beta and does not yet offer the full suite of tools that the current Console does, so for the purposes of this post we will stick with the “old” version.

How to Set up Google Search Console

Setting up Google Search Console is a fairly simple task. You will need to add the following versions of your site:

http://yourdomain.com

https://yourdomain.com

http://www.yourdomain.com

https://www.yourdomain.com
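If you manage several sites, the four property URLs above can be generated from a bare domain so none get missed. This is a minimal sketch; `property_urls` is a hypothetical helper and `yourdomain.com` is just a placeholder.

```python
def property_urls(domain: str) -> list[str]:
    """Return the four http/https and www/non-www variants of a domain."""
    # Normalise input such as "https://www.YourDomain.com/" to "yourdomain.com".
    bare = domain.strip().lower()
    for prefix in ("https://", "http://"):
        if bare.startswith(prefix):
            bare = bare[len(prefix):]
    if bare.startswith("www."):
        bare = bare[len("www."):]
    bare = bare.rstrip("/")
    # Same order as the list above: non-www first, http before https.
    return [
        f"{scheme}://{host}"
        for host in (bare, f"www.{bare}")
        for scheme in ("http", "https")
    ]


for url in property_urls("yourdomain.com"):
    print(url)
```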

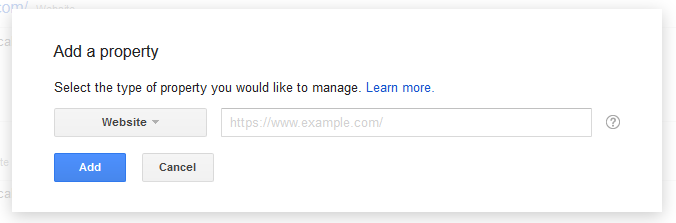

When you log in, you should see a red “Add A Property” button.


Leave the dropdown set to “Website”, enter the full URL of your website domain and then press the “Add” button.

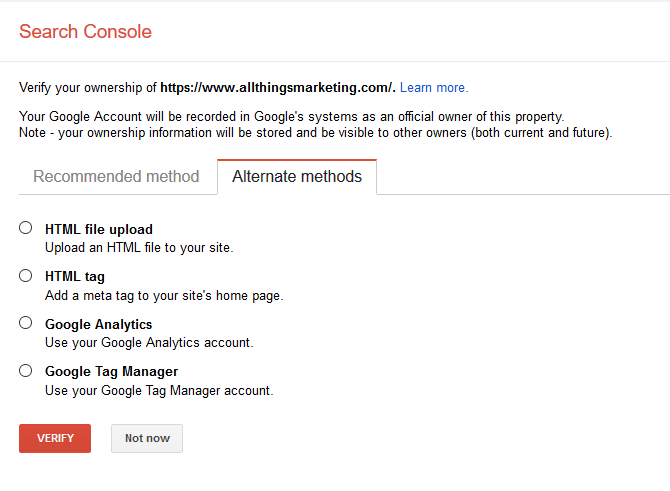

You will then need to verify your website. I recommend you choose “Alternate methods”.

Note that if you choose to verify using Google Analytics, you will need to be running the “asynchronous tracking code”. More information here: https://support.google.com/analytics/answer/1008080

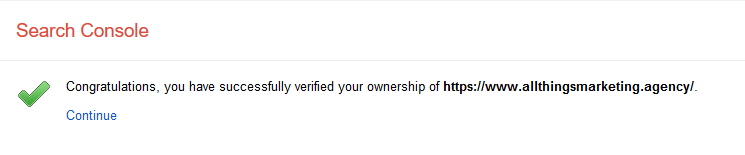

If you are successful you should see a success message:

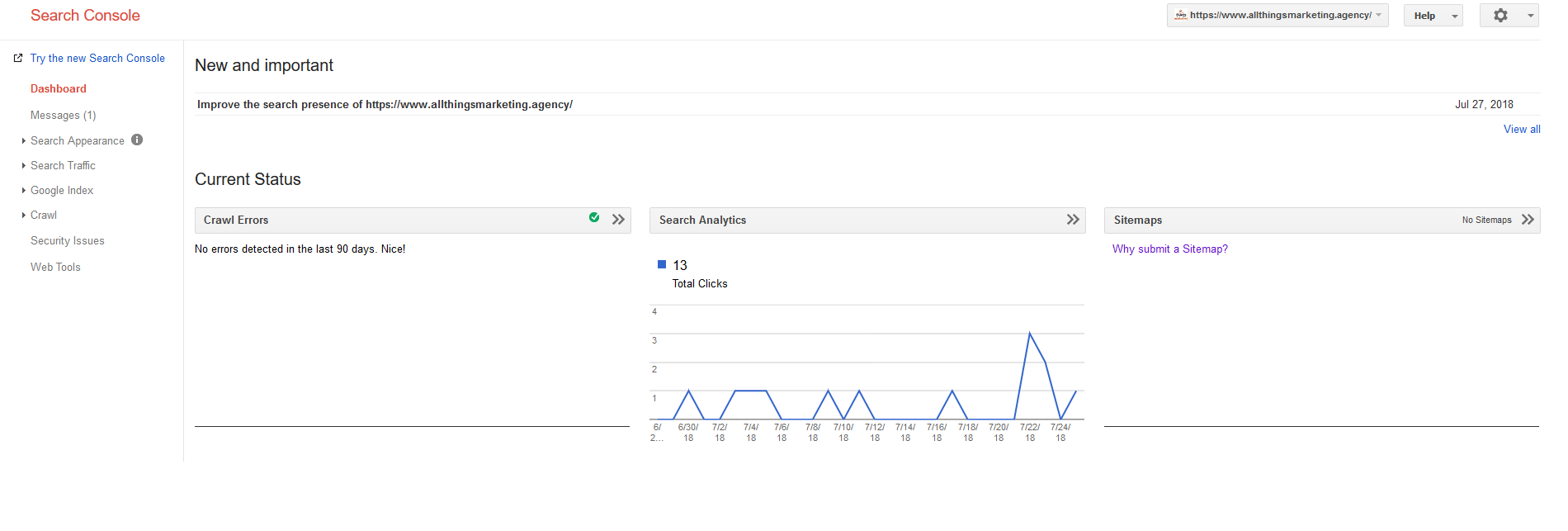

From this dashboard you will be able to submit an XML sitemap, review your Search Analytics performance and view the number of errors on your website detected by Googlebot over the past 90 days.

How to use Google Search Console

Getting the most from Google Search Console requires getting your hands dirty!

You’ll need to move beyond the dashboard. In my opinion, the following menu items are generally the most useful tools:

- Search Appearance > HTML Improvements

- Search Traffic > Search Analytics

- Crawl > Fetch as Google

- Crawl > robots.txt Tester

- Crawl > Sitemaps

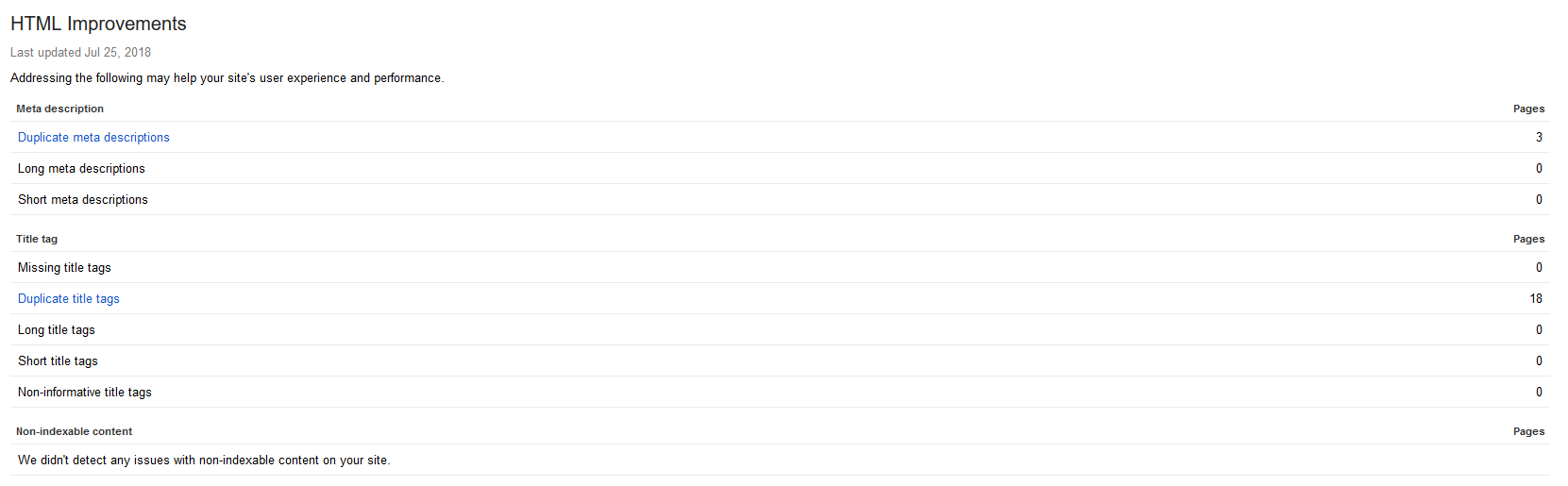

HTML Improvements

Here Google indicates whether your metadata needs improving. Issues such as duplicate meta descriptions, missing title tags and non-indexable content can then be fixed by your web dev team.

I would prefer to see Google improve this tool so it shows whether it considers your content unique enough, or whether it sees it as a near duplicate of content elsewhere.
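If your web dev team wants to catch some of these issues before Google reports them, a rough offline check is possible. The sketch below is not how Google builds the HTML Improvements report; it assumes you already have each page’s HTML in hand, and `HeadAudit` and `audit` are hypothetical names. It flags pages with no `<title>` and groups pages that share the same meta description.

```python
from collections import defaultdict
from html.parser import HTMLParser


class HeadAudit(HTMLParser):
    """Collects the <title> text and meta description of one HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit(pages: dict[str, str]) -> dict[str, list]:
    """pages maps URL -> HTML. Reports missing titles and duplicate descriptions."""
    missing_title = []
    by_description = defaultdict(list)
    for url, html in pages.items():
        parser = HeadAudit()
        parser.feed(html)
        if not parser.title.strip():
            missing_title.append(url)
        if parser.description:
            by_description[parser.description].append(url)
    duplicates = [urls for urls in by_description.values() if len(urls) > 1]
    return {"missing_title": missing_title, "duplicate_descriptions": duplicates}
```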

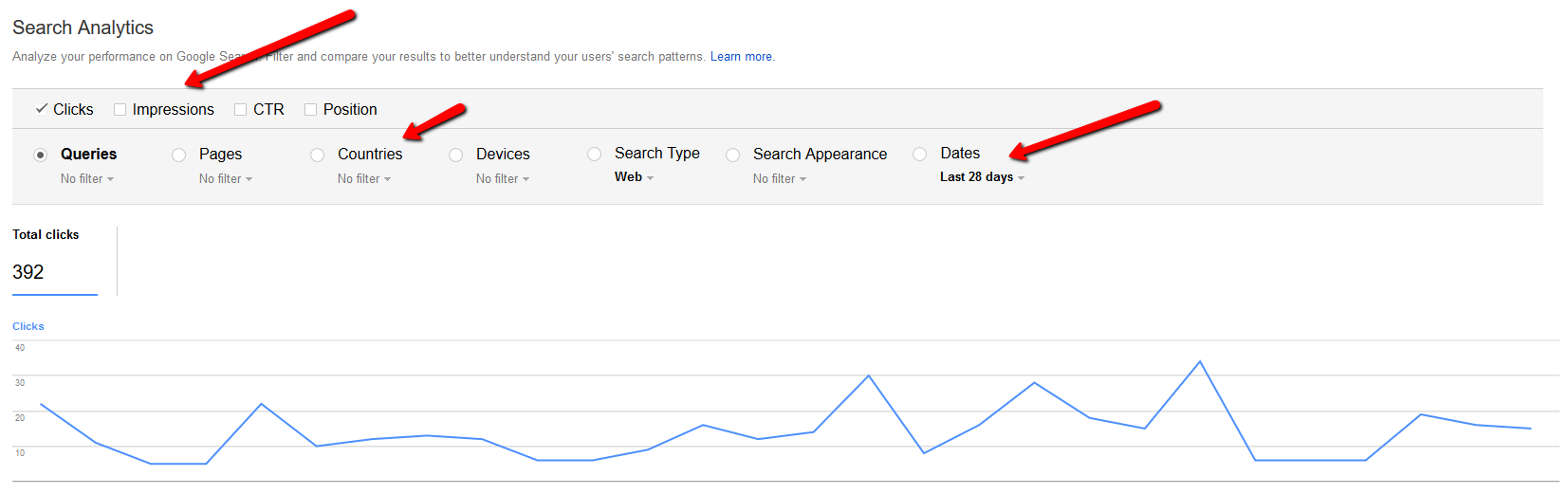

Search Analytics

This is probably the most used tool in GSC, and one I recommend you check on a weekly basis.

Select “Clicks”, “Impressions” and “Position” to bring up a list of your keywords within a specified date range (up to 90 days in this version of GSC).

You can then review your keywords and the clicks they get relative to their impressions. Treat the Position figure with caution: it is an average position, not a specific ranking, so it can be fairly inaccurate for any individual search. I suggest sorting your keywords by Impressions and then looking for phrases with a high number of impressions, few clicks and an average position of 10 to 20. Google Search Console can also show you which of your web pages generate lots of impressions but few clicks, highlighting pages that might benefit from additional content optimisation.
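The “lots of impressions, few clicks, position 10 to 20” check can be automated once you have the keyword rows exported. This is a minimal sketch: `QueryRow` and `opportunities` are hypothetical names, the row fields are assumed rather than taken from the export format, and the thresholds (at least 100 impressions, a click-through rate of 2% or less) are illustrative assumptions to tune for your own site.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    """One keyword row from a Search Analytics export (field names assumed)."""
    query: str
    clicks: int
    impressions: int
    position: float  # average position, not an exact ranking


def opportunities(rows, min_impressions=100, max_ctr=0.02, band=(10.0, 20.0)):
    """Keep rows with plenty of impressions, a low click-through rate and an
    average position inside `band`, sorted by impressions (highest first)."""
    low, high = band
    picked = []
    for row in rows:
        # Skip thin data; max() also avoids dividing by zero impressions.
        if row.impressions < max(min_impressions, 1):
            continue
        ctr = row.clicks / row.impressions  # click-through rate
        if ctr <= max_ctr and low <= row.position <= high:
            picked.append(row)
    return sorted(picked, key=lambda row: row.impressions, reverse=True)
```

Pages sitting on page two of the results with plenty of impressions are often the quickest wins, which is why the position band is the default filter here.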

For record keeping, I suggest you download these results once a month so you can track changes over time. Google has increased the time frame from 90 days to 16 months in the “new” version.
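Once you have a few monthly downloads, comparing them shows which keywords are moving. This sketch assumes you have already loaded each month’s file into a dictionary of `{query: average position}` keyed by a `YYYY-MM` label; `position_changes` is a hypothetical helper. Because Position is an average, small movements are best treated as noise.

```python
def position_changes(snapshots: dict[str, dict[str, float]]) -> dict[str, float]:
    """snapshots maps a month label such as '2018-01' to {query: average position}.

    Returns last-month position minus first-month position for every query
    present in both months. A negative value means the query moved up
    (closer to position 1).
    """
    months = sorted(snapshots)  # 'YYYY-MM' labels sort chronologically
    if len(months) < 2:
        return {}
    first, last = snapshots[months[0]], snapshots[months[-1]]
    return {
        query: round(last[query] - first[query], 1)
        for query in first
        if query in last
    }
```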

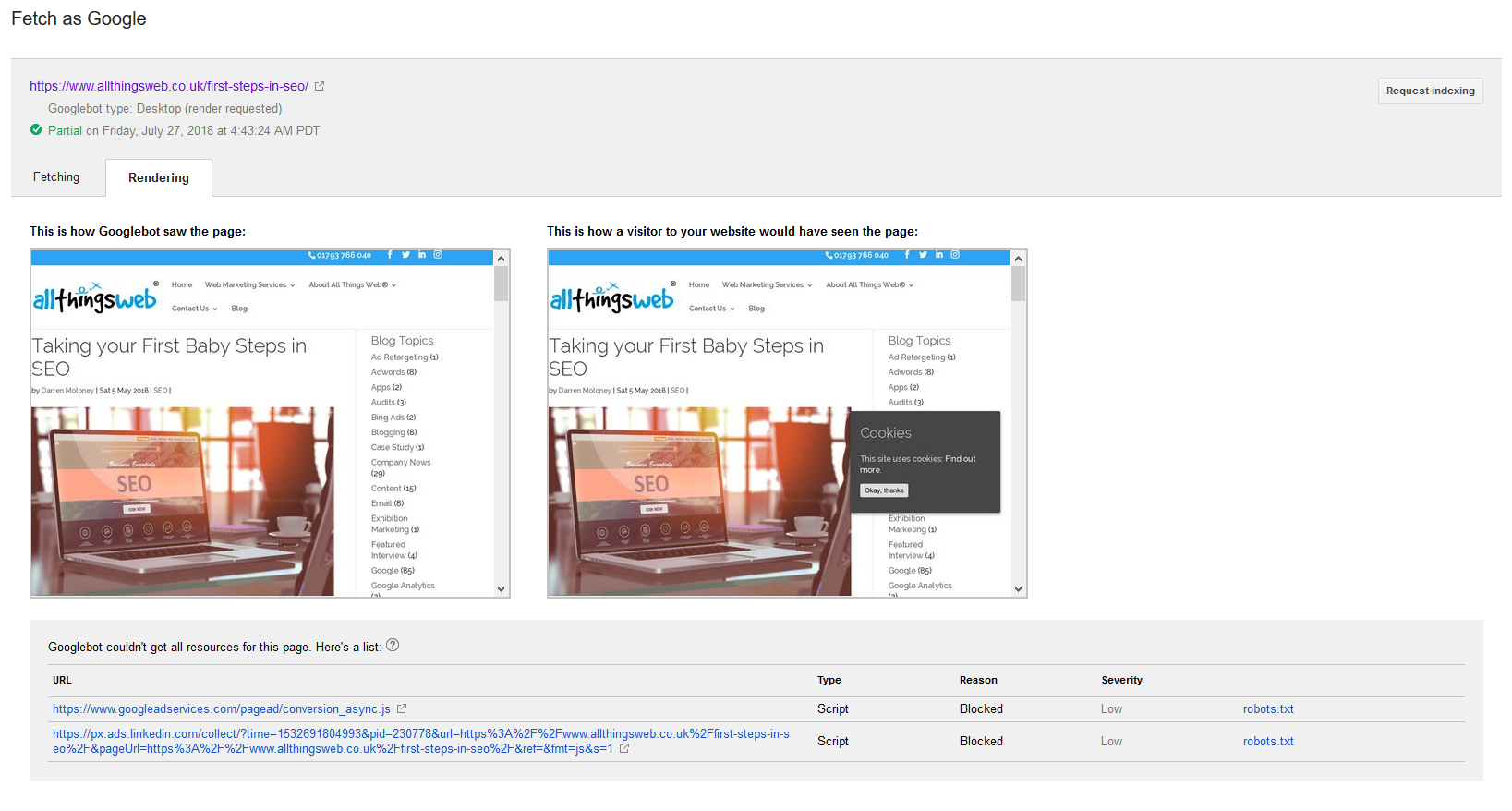

Fetch as Google

If you are not seeing one of your pages in Google’s results, you can instruct Googlebot to “Fetch and Render” the page. This shows whether Googlebot is having issues crawling your page, so you can make any necessary fixes.

I have used one of our website pages as an example (though this one already ranks in 3rd place on Google for “Baby SEO Steps”):

A couple of issues, caused by resources from googleadservices and LinkedIn, are resulting in only a “Partial” render; if we were to remove these issues, the tool would indicate a “Full” render. In the top right there is a grey “Request indexing” button. The page used here is already indexed in Google, so there is no need to press it.

If a page is failing to show in Google, I would make Fetch as Google your first port of call to resolve the problem.

I’d also recommend that when you create new content on your site, you use this tool to help ensure your new page is crawled and indexed promptly.

Robots.txt Tester

A robots.txt file is generally used to block Googlebot (and other bots) from certain areas of your website, preventing crawling and therefore, usually, indexing. Mistakes can happen, though, and you can end up blocking Googlebot from parts of your website you never intended to. This testing tool helps you confirm your robots.txt file is in place and working correctly.
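For a quick local sanity check before using the tester, Python’s standard `urllib.robotparser` can answer “may Googlebot fetch this URL?”. This is a sketch with a made-up robots.txt and a hypothetical `googlebot_allowed` helper. The standard-library parser does not implement all of Google’s matching rules (for example `*` and `$` wildcards in paths, or longest-match precedence between Allow and Disallow), so the Search Console tester remains the authority.

```python
from urllib.robotparser import RobotFileParser

# Example rules only; substitute your own robots.txt contents.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

User-agent: Googlebot
Disallow: /checkout/
"""


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Return True if these robots.txt rules let Googlebot fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


print(googlebot_allowed(ROBOTS_TXT, "https://www.yourdomain.com/admin/login"))
```

This example also shows an easy mistake to make: once a `User-agent: Googlebot` group exists, Googlebot follows only that group and ignores the `*` group, so `/admin/` is not blocked for Googlebot here.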

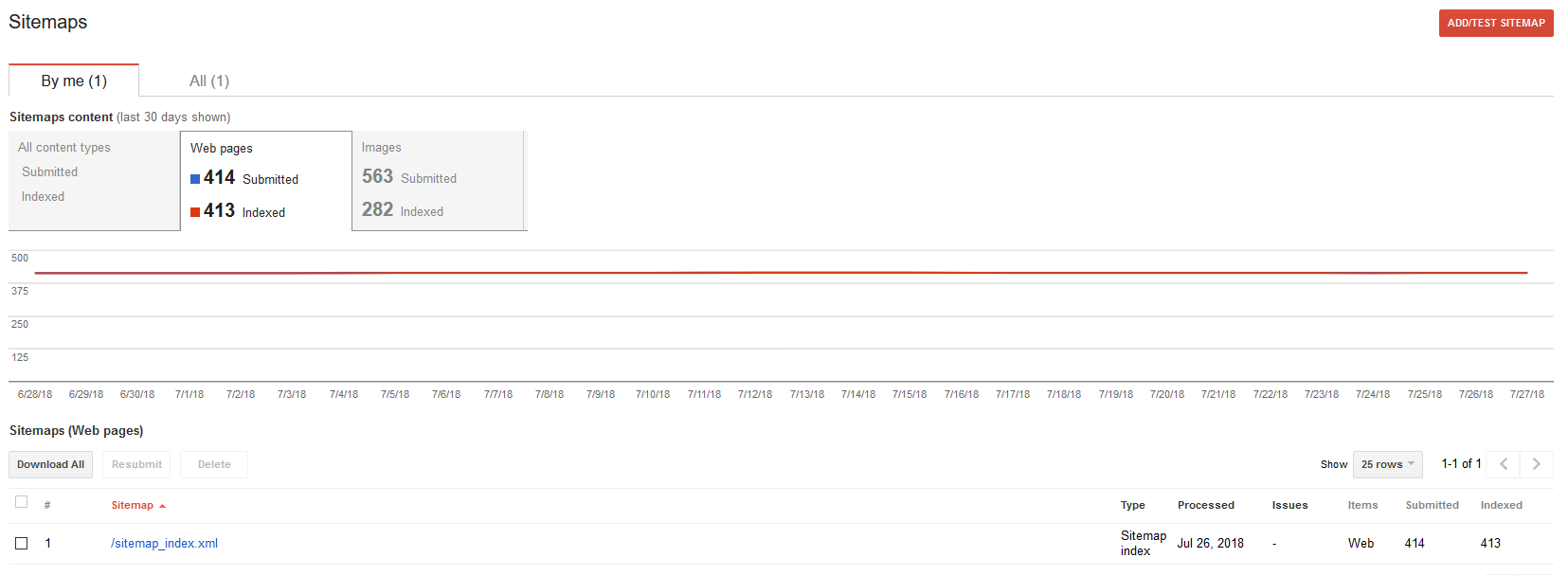

XML Sitemaps

To help Googlebot crawl your site more efficiently, I recommend serving an XML sitemap, which helps Google find new pages on your site.
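For a small site you can also generate a basic sitemap yourself. This minimal sketch follows the sitemaps.org protocol, where each page needs only a `<loc>` entry; optional tags such as `<lastmod>` are left out, and `build_sitemap` is a hypothetical helper. A single sitemap file is limited to 50,000 URLs under the protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap listing each URL in its own <url><loc>."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # '&' etc. are escaped automatically
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap(["https://www.yourdomain.com/", "https://www.yourdomain.com/blog/"]))
```

Save the output as `sitemap.xml` at your site root and submit it under Crawl > Sitemaps.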

If you want to quickly create an XML sitemap I’d recommend this tool:

It offers a free version limited to 500 pages, which is enough for most smaller websites. There is also a paid version that offers many more features, and I would recommend investing in it: for a fee of $3.49 it really is a no-brainer.

Getting the Most from Google Search Console

Getting the most from Google Search Console really requires you to log in daily or weekly and regularly review any issues that are shown.

Typically, the most common issues are indexation problems and broken pages, so taking steps to fix these is important. Google Search Console can help you offer a clean website with good content, which can help improve your SEO.

How much does Google Search Console cost?

To Google’s credit, this tool is completely free to use, and yes, Google, we’d like you to keep it this way!

Further Reading on Google Search Console:

I recommend you read more on how to get the most from GSC, as this post doesn’t cover it fully; it just gives an overview with some basic initial setup instructions.

https://backlinko.com/google-search-console

https://support.google.com/webmasters/?hl=en#topic=3309469

https://searchengineland.com/library/google/google-search-console

Darren Moloney is Founder and Technical Director at All Things Web® https://www.allthingsweb.co.uk/ and is always willing to talk about how your business can make better use of the web, search, and digital marketing. Email darren@allthingsweb.co.uk or Phone 01793 766040